Generative AI and the question of authorship

The issue of whether an artificial intelligence (AI) platform or its programmer(s) should, or can, be credited with the creation of an Intellectual Property (IP) asset has been hotly debated over the last couple of years. But if recent events are any indicator, the world of AI can be transformed in just a couple of months. Will the IP system be able to keep up?

Patent offices in the United States, European Union, United Kingdom and elsewhere have already determined that an AI tool cannot hold inventorship: only natural persons can be inventors. However, jurisdictions with no substantive patent examination procedures, such as South Africa, have allowed the submission of applications naming an AI as the sole inventor (but not as an assignee). Meanwhile, in Australia, which has both formal and substantive examination, a Federal Court judge took a more expansive interpretation of what an "inventor" can be in 2021, holding that non-human entities can fill the role (a finding the Full Federal Court reversed on appeal in 2022):

"Computer", "controller", "regulator", "distributor", "collector", "lawnmower" and "dishwasher" are all agent nouns. As each example demonstrates, the agent can be a person or a thing. Accordingly, if an artificial intelligence system is the agent which invents, it can be described as an "inventor".

But with generative AI that produces entirely new creative works, the issue has yet to be addressed at this juridical level. Even if it is settled in a precedent-creating legal dispute sometime in the near future, the question of authorship is not going anywhere, especially given the rapidly increasing sophistication of these programs.

How generative AI "creates"

To better understand the question of non-human authorship, we need to explore the basic methodology of generative AI platforms.

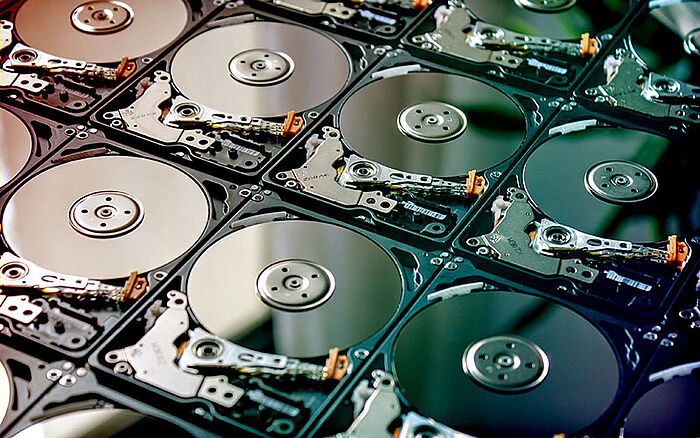

Generally speaking, these tools use a subset of AI technology called machine learning (ML). In this field, engineers create algorithmic models and "train" them on vast amounts of pre-existing data to recognize patterns. The scale is breathtaking: not gigabytes, but more like petabytes and exabytes. This is because the model needs as many examples as possible upon which to base its "creations": millions, perhaps even billions, of documents, image-text pairings and so on.

One petabyte is the equivalent of 1024 terabytes, and 1024 petabytes equal one exabyte. To put that into perspective, to store one exabyte of data, you would need more than 120,000 hard drives - or around 763 billion floppy disks.
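
For readers who want to check the arithmetic, here is a minimal sketch of the conversion. The capacities are assumptions, not figures from any cited source: a floppy disk is treated as 1.44 × 1024² bytes (the reading that reproduces the 763 billion figure), and the hard drive is a hypothetical 8-terabyte model.

```python
import math

# Binary (1024-based) units, matching the conversion used in the text.
MIB = 1024 ** 2   # bytes in one mebibyte
TIB = 1024 ** 4   # bytes in one tebibyte
PIB = 1024 ** 5   # bytes in one pebibyte
EIB = 1024 ** 6   # bytes in one exbibyte

# Assumed capacities (illustrative only, not taken from the article).
FLOPPY_BYTES = 1.44 * MIB   # nominal "1.44 MB" floppy disk
DRIVE_BYTES = 8 * TIB       # hypothetical 8 TB hard drive


def units_needed(total_bytes, unit_bytes):
    """Return how many storage units are needed to hold total_bytes."""
    return math.ceil(total_bytes / unit_bytes)


floppies = units_needed(EIB, FLOPPY_BYTES)
drives = units_needed(EIB, DRIVE_BYTES)

print(f"Petabytes per exabyte: {EIB // PIB:,}")
print(f"Floppy disks per exabyte: {floppies:,}")
print(f"8 TB drives per exabyte: {drives:,}")
```

Under these assumptions, the floppy count comes out at roughly 763 billion and the drive count at a little over 130,000, in line with the figures above. A different assumed drive size would change the second number proportionally.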

Over time and with exposure, the model learns how to differentiate the samples it has access to using the parameters defined by the programmer, meaning it can then be instructed to generate new text or imagery based on user prompts. In this way, image generation tools like DALL-E, Craiyon and Midjourney produce "unique" works that may be drawn from dozens or hundreds of example photographs, drawings, digital art, paintings and other visuals.

Similarly, a text generator or online translator tool has access to vast swaths of information sourced from public records, digitized books, Wikipedia entries, blogs, news articles and more. There are also video- and music-generating AI platforms, including Synthesia, Luma, ElevenLabs and Supertone, though these tools have not been as big a part of the generative AI conversation as ChatGPT and DALL-E.

At the threshold of infringement

The question of whether an AI-generated textual, visual or auditory work constitutes copyright or trademark infringement is sometimes easily answered, sometimes not. For example, if an AI-generated image is created with datasets entirely consisting of internally owned or licensed works — such as from royalty-free stock image libraries — it is unlikely to be considered infringement.

However, if the datasets include copyrighted imagery or depictions of trademarked IP (which they almost certainly do), the case for an infringement claim becomes much stronger. The burden of proof would fall upon the alleged infringer, that is, the developer who gathered and input the protected material, to prove they did not violate any IP rights.

By the same logic that a generative AI cannot own IP rights, it also cannot be liable for infringing them. Ongoing and future court cases will need to establish which actions are acceptable and which are out of bounds for developers and end users.

The picture grows murkier, however, when user-generated content is brought into the mix. Social media sites like Tumblr and Facebook or art-specific platforms like DeviantArt operate their own licensing terms that may or may not afford them the commercial right to exploit works hosted on them.

But business, as much as nature, abhors a vacuum, and so numerous IP lawsuits are making their way through the courts that together have the potential to close the gap in case law concerning the training of generative AIs. Stability AI, the developer of the image generator Stable Diffusion, currently finds itself on the receiving end of several suits, notably from Getty Images and various artists. Earlier this year, Sarah Andersen, Kelly McKernan and Karla Ortiz filed a class-action lawsuit against Stability AI and two other platforms: Midjourney and DeviantArt, the developer of an image generator called DreamUp. They claim that one in every 50 images (or about 3.3 million samples) within the training dataset, LAION-Aesthetics, came from DeviantArt – without the permission of the contributing artists.

Fair or unfair use? Transformation or imitation?

A spokesperson for Stability AI commented that its image-generation process fell under fair-use guidelines. Variously titled depending on the jurisdiction, fair use or fair dealing provisions allow certain unauthorized uses of copyrighted material. These vary considerably, but for the most part, uses for the purposes of commentary, critique and education are exempt from copyright infringement. Not-for-profit uses (that do not commercially damage the copyright owner) and "transformative" derivations are also typically permissible.

Works in the public domain are available for all to use, imitate, modify and share. Copyrighted works, on the other hand, may be modified without the IP right holder's permission if these changes are considered artistically substantial and do not injure the owner.

Many generative AIs are commercial enterprises, so a fair-use defense could conceivably rest on the "transformative" nature of the resulting works. That said, it is commonly the input material that copyright owners take the most umbrage at, i.e., training the AI on protected works is the alleged unfair use.

And the issue is not confined to the visual and written arts. In a recent, bizarre incident, an individual known as "Ghostwriter" posted a song titled "Heart on My Sleeve" on Spotify, YouTube and TikTok. The viral hit purportedly used AI-generated voice simulations of Drake and The Weeknd, prompting rights owner Universal Music Group to petition for its removal. The song was available for only six days and was deleted from monetizable platforms before it generated significant revenue. Of course, in keeping with the Streisand effect, unofficial mirrors on YouTube will linger for much longer.

The outcomes of current and near-future litigation will surely have tremendous precedential weight, and IP experts will be watching very closely. It is possible that definitions of authorship may have to adapt to include – or exclude – generative AIs. No matter what, the IP law experts at Dennemeyer & Associates are passionate about defending IP rights and will always work to protect genuine inventors and creators.
